Redis

Cloud Control includes a fully managed Redis cluster with 2 run modes:

- A cluster with read/write support

- A cluster with read-only support which can act as a streaming replica of a read/write cluster

This is the default backend for the following components:

| Component | Userplane | Controlplane |
|---|---|---|
| Dynamic Filtering (several components) | yes | yes |

Redis cluster types

Cloud Control Redis clusters are deployed in 2 flavors, depending on the use case:

- Cluster with read/write support

- Cluster with read-only support, intended to be a streaming replica of a cluster with read/write support (i.e. a mirror)

Redis cluster with read/write support

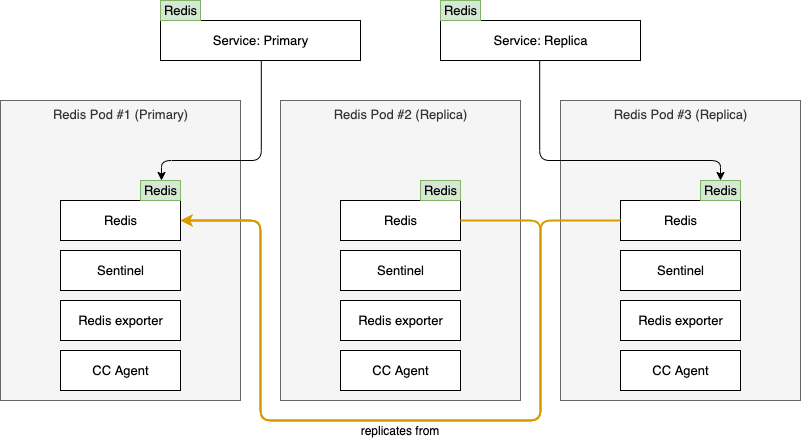

A Redis cluster with read/write support has 3 nodes by default, one of which will be designated as primary and the others as replica. The primary node will support both reads & writes, while the replica nodes will only be available for reads. Determination of which node in the cluster is the primary is managed by Sentinel. Sentinel also makes sure all nodes designated as replica are configured to receive streaming replication from the primary.

Access to the Redis cluster is enabled via 2 services, corresponding to the role of the nodes:

- Primary Service: Exposes the node currently designated as `primary`

- Replica Service: Exposes a node currently designated as `replica`

Note: To keep failover as fast as possible when the primary fails, replica traffic is pinned to 1 specific replica node, and the other replica node is reserved as the next-in-line to become primary. The reason is that a replica drops all of its connections when it is promoted to primary, which is undesirable if other Redis clusters with read-only support (mirrors) are replicating from this read/write cluster.

To ensure the Redis cluster is managed and monitored, each Redis Pod contains several containers:

- Redis: Redis runs in this container

- Sentinel: Sentinel is responsible for managing the Redis process and coordinating with Sentinel in the other cluster nodes to determine which node is the `primary`

- Redis Exporter: Collects Prometheus metrics from Redis

- Cloud Control Agent: Responsible for managing the Redis configuration, exposing metrics and enabling management activities via NATS

This results in a deployment as follows:

As you can see the nodes have the following roles:

- Pod #1: `primary`

- Pod #2: `replica`, but reserved to be the next-in-line `primary`

- Pod #3: `replica`

Automated failover

To ensure the Redis cluster with read/write support can recover from failures, it always consists of at least 3 nodes. This allows us to ensure that if 1 of the nodes fails or runs on a Kubernetes node undergoing maintenance we can recover and have at least 1 primary and replica left running. The process for failing over is fully managed by Sentinel and always follows these rules:

- The `primary` only switches when the current `primary` experiences a failure

- When selecting a `primary`, preference is always given to the Redis Pod with the lowest numerical suffix in the Pod name (i.e. `redis-[Cluster Name]-0`) which is not currently the `replica` receiving traffic from the `replica` Service

- When selecting a `replica` to receive traffic from the `replica` Service, preference is given to the Redis Pod with the highest numerical suffix in the Pod name (i.e. `redis-[Cluster Name]-2` in a cluster with 3 replicas)

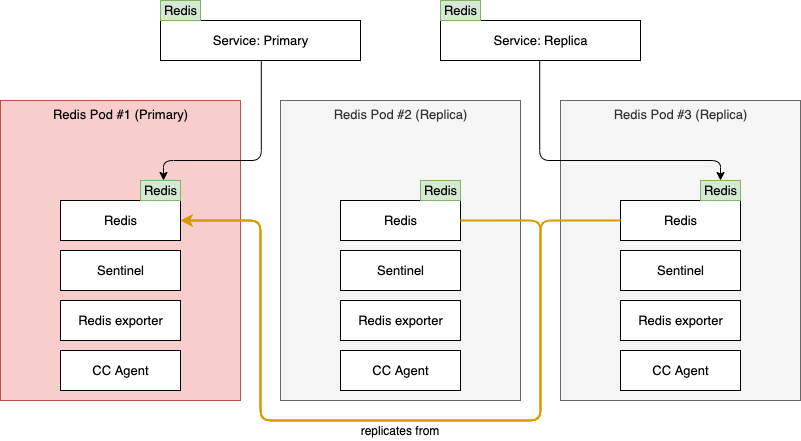

In the below scenario, Pod #1 is currently our primary, but it fails:

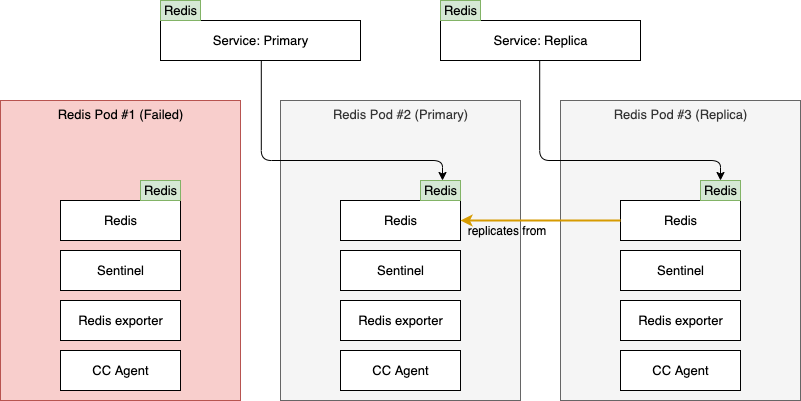

Once Sentinel notices the current primary is no longer in a healthy state, a new primary is elected by the remaining nodes and the Service objects and replication are reconfigured accordingly. Following the previously described rules, the new primary is selected:

When the cause of the failure of Pod #1 has been determined and resolved, it can rejoin the cluster. It will do so as replica, since another node took over the role of primary. The cluster will now look like this:

From this point onwards, the same rules continue to apply when another node fails:

- If Pod #1 fails: No action, this Pod is not currently a `primary` and is not the `replica` receiving traffic from the `replica` Service

- If Pod #2 fails: This Pod is `primary`, so the Redis Pod which is healthy and has the lowest numerical suffix while not being the `replica` receiving traffic from the `replica` Service is elected to be the new `primary`: Pod #1

- If Pod #3 fails: This is the `replica` receiving traffic from the `replica` Service, so the Redis Pod which is healthy and has the highest numerical suffix while not being the `primary` is selected to be the next `replica` to receive traffic from the `replica` Service: Pod #1

Data Persistency

Persistency of data stored in the Redis cluster with read/write support is supported by 2 mechanisms:

- `replica` nodes are constantly replicating from the `primary` to ensure a `primary` failure can be mitigated without data loss

- Pods are deployed as part of a StatefulSet with a PersistentVolume on which the Redis data is stored to ensure the data can withstand a restart of the Pod

Backup & Restore

Backup & restore functionality is currently not supported for the Redis clusters. The current use cases for Redis within Cloud Control do not call for it, because a better alternative is available: the Cloud Control API can rebuild the data in Redis from the data stored in the Postgres database used by dynamic filtering.

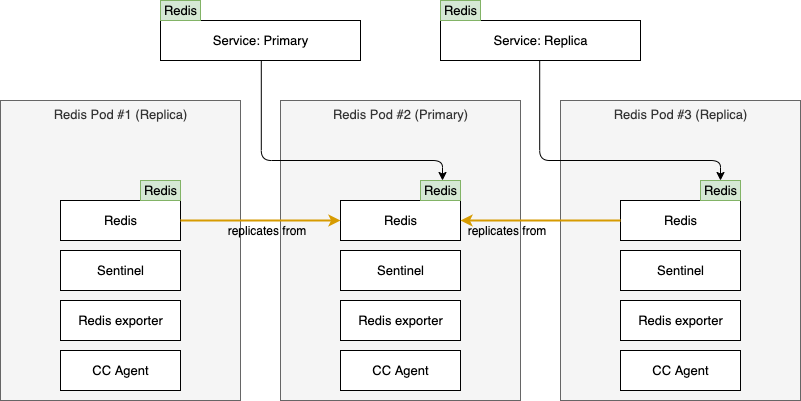

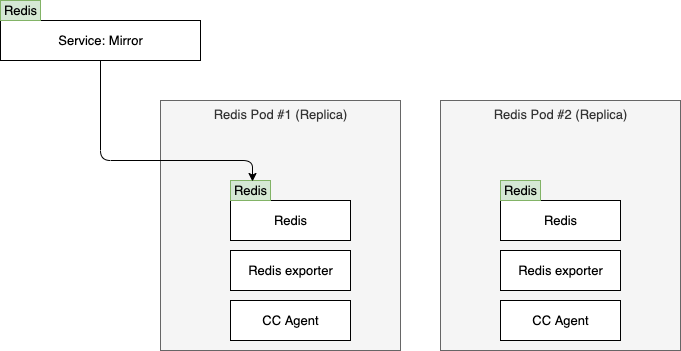

Redis cluster with read-only support (mirror)

A Redis cluster with read-only support (mirror) is a much simpler deployment than the read/write version. By default it consists of only 2 Pods, both configured as a replica, which replicate from the replica Service of a Redis cluster with read/write support. This read-only cluster has a single Service object, named `mirror`, to which applications can connect. Since there is no need for primary election, there is no Sentinel container in these Pods.

If we leave out the replication from the read/write cluster, a mirror deployment looks as follows:

Note: By default a Redis mirror deployment has the mirror Service configured to point to a single replica until it fails. The other replica is the next-in-line to become the recipient of the mirror Service. This is done to guarantee a stable sticky connection to all applications which access the mirror Service, while also guaranteeing a successor is ready if the current recipient of the mirror Service fails.

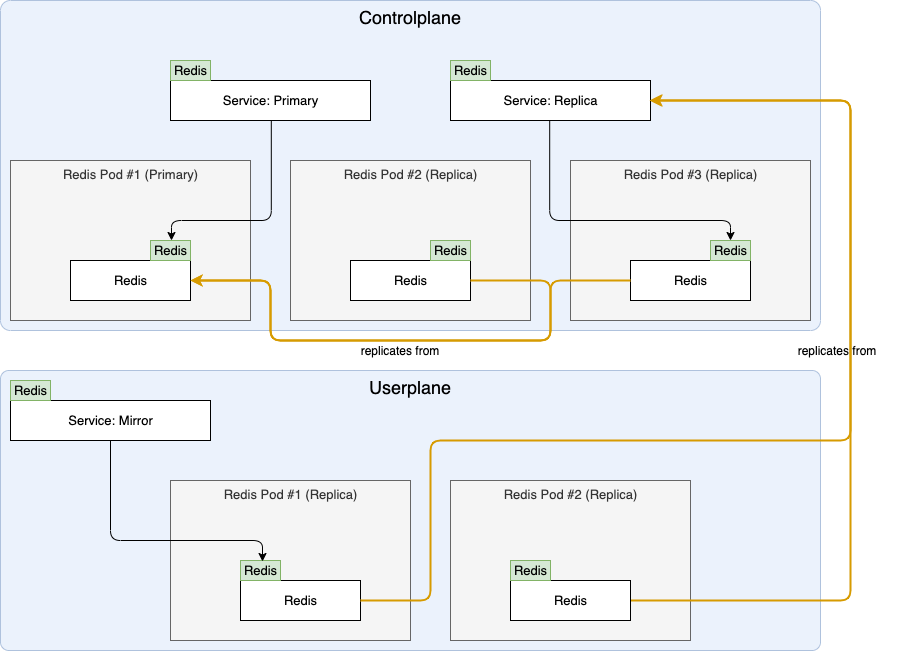

To illustrate the replication from the read/write cluster and the use case for these mirrors, the following diagram is useful:

The deployment in the above diagram is how Userplane deployments with dynamic filtering obtain the necessary filtering details from the Controlplane. Both replica Pods in the Userplane constantly replicate from the replica Service in the parent read/write cluster in the Controlplane.

Data Persistency

Persistency of data stored in the Redis cluster with read-only support is supported by 2 mechanisms:

- Multiple `replica` nodes are constantly replicating from the parent read/write cluster to ensure a failure of the current recipient of the `mirror` Service can be mitigated without data loss

- Pods are deployed as part of a StatefulSet with a PersistentVolume on which the Redis data is stored. If a Pod fails and is later recovered, it can often use the local copy as a starting point to avoid having to replicate the entire dataset from the parent read/write cluster

Backup & Restore

Since the read-only deployment does not have any notion of a primary, it is also not eligible for a sensible backup & restore setup. If a read-only cluster ever fails completely, it will automatically re-replicate from the parent read/write cluster once it recovers.

Configuration Reference

Redis clusters can be configured as nested items of the Cloud Control component which requires it. For example, to configure the Redis read/write cluster deployed as part of the Dynamic Filtering deployment:

    dynamic:
      redis:
        tls:
          enabled: true
          certManager: true
        resources:
          limits:
            cpu: 2
            memory: 8Gi
        pvc:
          template:
            resources:
              requests:
                storage: 60Gi

The above Redis read/write cluster will have:

- TLS enabled, with a Certificate requested from CertManager

- Redis container resource limits set at 2 CPUs and 8 Gi RAM

- Persistent volumes for each Pod with a capacity of 60 Gi

Configuration for a Redis read-only (mirror) cluster is similar, but requires a few extra items to point it to the desired parent Redis cluster with read/write support. For example, a configuration of the Redis read-only cluster used by a Userplane Dynamic filtering filtersettings object named testdynamic:

    filterSettings:
      testdynamic:
        dynamic:
          enabled: true
          redis:
            mirror:
              host: "redis-dynamic-replica.controlplane.svc"
              passwordSecretName: redis-replica-credentials-for-mirror
            resources:
              limits:
                cpu: 2
                memory: 8Gi
            pvc:
              template:
                resources:
                  requests:
                    storage: 60Gi

The above read-only (mirror) cluster will:

- Replicate from the `replica` Service of a Redis read/write cluster via the endpoint `redis-dynamic-replica.controlplane.svc`, authenticating with the credentials stored in Secret `redis-replica-credentials-for-mirror`

- Have Redis container resource limits set at 2 CPUs and 8 Gi RAM

- Have persistent volumes for each Pod with a capacity of 60 Gi

Since there are 2 variations of Redis configuration, the reference has been split into the following parts:

- Generic: Can be applied to all Redis configurations

- Read-write configuration: Applied only to read-write Redis configurations

- Read-only configuration: Applied only to read-only Redis configurations

Generic

The full list of parameters which can be used on all Redis configurations:

| Parameter | Type | Default | Description |
|---|---|---|---|
| `affinity` | k8s:Affinity | | pod affinity (Kubernetes docs: Affinity and anti-affinity). If unset, a default anti-affinity is applied using `antiAffinityPreset` to spread pods across nodes |
| `antiAffinityPreset` | string | "required" | pod anti affinity preset. Available options: "preferred" "required" |
| `agentHeartbeat` | integer | 5 | How often the agent should run its heartbeat routine, responsible for making sure all Redis pods have the correct primary and replica tags. The lower this value, the faster a failover can occur if failure is detected |
| `agentLogLevel` | string | "info" | Verbosity of logging for the agent container. Available options: "debug" "info" "warn" "error" |
| `config` | dictionary | | Set of key:value pairs to override Redis configuration parameters. Entries under `config` will be merged into the defaults |
| `hostNetwork` | boolean | false | Use host networking for pods |
| `logLevel` | string | "info" | Level of logging. Available options: "DEBUG" "NOTICE" "VERBOSE" "WARNING" |
| `nodeSelector` | k8s:NodeSelector | {} | Kubernetes pod nodeSelector |
| `passwordSecretName` | string | | Name of a pre-existing Kubernetes Secret containing a password to be set as Redis password for this cluster. If this is omitted a random password is generated and stored in a Secret. |
| `passwordSecretKey` | string | "password" | Name of the item in the `passwordSecretName` Secret holding the password |
| `podAnnotations` | k8s:Annotations | {} | Annotations to be added to each pod |
| `podLabels` | k8s:Labels | {} | Labels to be added to each pod |
| `podSecurityContext` | k8s:PodSecurityContext | | SecurityContext applied to each pod |
| `pvc` | Persistent Volume | | Configuration of the Volume Claim template to request your storage provisioner to provide the Redis Pods with appropriate persistent storage volumes |
| `replicas` | integer | Read-write: 3, Read-only: 2 | Default number of replicas in the StatefulSet |
| `resources` | k8s:Resources | | Resources allocated to the redis container if `resourceDefaults` (global) is true |
| `tls` | TLS | | TLS configuration for inbound Redis traffic |
| `tolerations` | List of k8s:Tolerations | [] | Kubernetes pod Tolerations |
| `topologySpreadConstraints` | List of k8s:TopologySpreadConstraint | [] | Kubernetes pod topology spread constraints |
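As an illustration of how several of the generic parameters combine, the following is a minimal sketch. It assumes the Redis cluster is nested under `dynamic.redis`, as in the Dynamic Filtering example earlier on this page. The values, and the choice of `maxmemory-policy` as the Redis setting to override, are illustrative assumptions rather than recommendations.

```yaml
dynamic:
  redis:
    # Prefer, rather than require, spreading Pods across nodes
    antiAffinityPreset: "preferred"
    # Lower than the default of 5, so a failure is acted on sooner
    agentHeartbeat: 2
    agentLogLevel: "debug"
    # Merged into the default Redis configuration (illustrative key/value)
    config:
      maxmemory-policy: noeviction
```

Parameters that are left out keep the defaults listed in the table above.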

Read-write configuration

The full list of parameters which can be used only on Redis read-write configurations:

| Parameter | Type | Default | Description |
|---|---|---|---|
| `primaryService` | Service | | Configuration of the Service exposing the primary node |
| `replicaService` | Service | | Configuration of the Service exposing the replica node |

Read-only configuration

The full list of parameters which can be used only on Redis read-only configurations:

| Parameter | Type | Default | Description |
|---|---|---|---|
| `mirrorService` | Service | | Configuration of the Service exposing the elected replica node |
| `mirror` | Mirror upstream | {} | Configuration of the upstream read-write cluster from which this read-only cluster will replicate |

Mirror upstream

Configuration parameters to configure the upstream from which this read-only cluster will attempt to replicate:

| Parameter | Type | Required | Default | Description |
|---|---|---|---|---|
| `caSecretName` | string | | | Name of a pre-existing Kubernetes Secret with a data item named `ca.crt` containing the CA in PEM format to use for validation in connections towards the upstream read-write cluster if it has TLS enabled |
| `host` | string | yes | | Hostname of the upstream read-write cluster to connect to |
| `passwordSecretName` | string | yes | | Name of a pre-existing Kubernetes Secret containing a password for authentication with the upstream |
| `passwordSecretKey` | string | | "password" | Name of the item in the `passwordSecretName` Secret holding the password |
| `port` | integer | | 6379 | Port of the upstream read-write cluster to connect to |
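When the upstream read-write cluster has TLS enabled, the optional parameters come into play. The following is a minimal sketch, assuming the same `filterSettings.testdynamic.dynamic.redis` nesting as the read-only example earlier on this page. The Secret name `controlplane-redis-ca` and the key `redis-password` are hypothetical, and `port` merely restates the default.

```yaml
filterSettings:
  testdynamic:
    dynamic:
      enabled: true
      redis:
        mirror:
          host: "redis-dynamic-replica.controlplane.svc"
          port: 6379
          passwordSecretName: redis-replica-credentials-for-mirror
          # Hypothetical key name; defaults to "password" when omitted
          passwordSecretKey: redis-password
          # Hypothetical Secret; it must contain a data item named ca.crt
          caSecretName: controlplane-redis-ca
```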

Persistent Volume

To configure the Persistent Volume requested for each Redis Pod you can modify the following parameters inside the `pvc` node:

    <parent>:
      redis:
        pvc:
          labels:
            my.prefix/labelname: labelvalue
          annotations:
            my.prefix/annoname: annovalue
          template:
            resources:
              requests:
                storage: 40Gi

These parameters can be configured as follows:

| Parameter | Type | Default | Description |
|---|---|---|---|
| `annotations` | k8s:Annotations | {} | Annotations to be added to the StatefulSet's volume claim template |
| `template` | k8s:PersistentVolumeClaimSpec | {} | Spec of PersistentVolumeClaim. Likely needs to be configured to ensure it complies with the expectations of the storage providers on your Kubernetes cluster. In addition, ensure the requested amount of storage is large enough for your use case. |
| `labels` | k8s:Labels | {} | Labels to be added to the StatefulSet's volume claim template |
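Because `template` is a standard Kubernetes PersistentVolumeClaimSpec, fields such as `storageClassName` and `accessModes` can be set alongside the storage request. The following is a minimal sketch, in which the storage class name `fast-ssd` is a hypothetical placeholder for a class offered by your own cluster:

```yaml
<parent>:
  redis:
    pvc:
      template:
        # Hypothetical storage class; use one available on your cluster
        storageClassName: fast-ssd
        accessModes:
          - ReadWriteOnce
        resources:
          requests:
            storage: 40Gi
```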

Scaling persistent volume after initial creation

The configuration options listed above are applied only on the initial deployment. Kubernetes does not allow a persistent volume to be modified via the StatefulSet after it has been provisioned.

To modify the persistent volume after the initial deployment (for example, to change its size), you will need to scale it using the method applicable to the storage provisioner that provisioned the storage.

Service

Parameters to configure the service objects. For example in a read-write deployment:

    <parent>:
      redis:
        primaryService:
          type: LoadBalancer
          annotations:
            metallb.universe.tf/address-pool: name_of_pool
        replicaService:
          type: LoadBalancer
          annotations:
            metallb.universe.tf/address-pool: name_of_pool

And in a read-only deployment:

    <parent>:
      redis:
        mirrorService:
          type: LoadBalancer
          annotations:
            metallb.universe.tf/address-pool: name_of_pool

| Parameter | Type | Default | Description |
|---|---|---|---|
| `allocateLoadBalancerNodePorts` | boolean | true | If true, services with type LoadBalancer automatically assign NodePorts. Can be set to false if the LoadBalancer provider does not rely on NodePorts |
| `annotations` | k8s:Annotations | {} | Annotations for the service |
| `clusterIP` | string | | Static cluster IP, must be in the cluster's range of cluster IPs and not in use. Randomly assigned when not specified. |
| `clusterIPs` | List of string | | List of static cluster IPs, must be in the cluster's range of cluster IPs and not in use. |
| `externalIPs` | List of string | | List of IP addresses for which nodes in the cluster will also accept traffic for this service. These IPs are not managed by Kubernetes and must be user-defined on the cluster's nodes |
| `externalTrafficPolicy` | string | Cluster | Can be set to Local to let nodes distribute traffic received on one of the externally-facing addresses (NodePort and LoadBalancer) solely to endpoints on the node itself |
| `healthCheckNodePort` | integer | | For services with type LoadBalancer and externalTrafficPolicy Local you can configure this value to choose a static port for the NodePort which external systems (LoadBalancer provider mainly) can use to determine which node holds endpoints for this service |
| `internalTrafficPolicy` | string | Cluster | Can be set to Local to let nodes distribute traffic received on the ClusterIP solely to endpoints on the node itself |
| `ipv4` | boolean | false | If true, force the Service to include support for IPv4, ignoring globally configured IP Family settings and/or cluster defaults. If `ipv4` is set to true and `ipv6` remains false, the result will be an ipv4-only SingleStack Service. If both are false, global settings and/or cluster defaults are used. If both are true, a PreferDualStack Service is created |
| `ipv6` | boolean | false | If true, force the Service to include support for IPv6, ignoring globally configured IP Family settings and/or cluster defaults. If `ipv6` is set to true and `ipv4` remains false, the result will be an ipv6-only SingleStack Service. If both are false, global settings and/or cluster defaults are used. If both are true, a PreferDualStack Service is created |
| `labels` | k8s:Labels | {} | Labels to be added to the service |
| `loadBalancerIP` | string | | Deprecated Kubernetes feature, available for backwards compatibility: IP address to attempt to claim for use by this LoadBalancer. Replaced by annotations specific to each LoadBalancer provider |
| `loadBalancerSourceRanges` | List of string | | If supported by the LoadBalancer provider, restrict traffic to this LoadBalancer to these ranges |
| `loadBalancerClass` | string | | Used to select a non-default type of LoadBalancer class to ensure the appropriate LoadBalancer provisioner attempts to manage this LoadBalancer service |
| `publishNotReadyAddresses` | boolean | false | Service is populated with endpoints regardless of readiness state |
| `sessionAffinity` | string | None | Can be set to ClientIP to attempt to maintain session affinity. |
| `sessionAffinityConfig` | k8s:SessionAffinityConfig | {} | Configuration of session affinity |
| `type` | string | ClusterIP | Type of service. Available options: "ClusterIP" "LoadBalancer" "NodePort" |
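The sketch below combines a few parameters from the table for the primary Service of a read-write deployment: a LoadBalancer that prefers dual-stack, routes only to endpoints on the receiving node, and restricts client source ranges. The CIDR ranges are documentation-only example values, and whether `loadBalancerSourceRanges` is honoured depends on the LoadBalancer provider, as noted in the table.

```yaml
<parent>:
  redis:
    primaryService:
      type: LoadBalancer
      # Both true: a PreferDualStack Service is created
      ipv4: true
      ipv6: true
      externalTrafficPolicy: Local
      # Example ranges only; replace with your own client networks
      loadBalancerSourceRanges:
        - 192.0.2.0/24
        - 2001:db8::/32
```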

TLS

Parameters to configure TLS for inbound traffic. An example:
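A minimal sketch of such a configuration, assuming the `<parent>.redis` nesting used by the other examples on this page and only the `enabled` and `certSecretName` parameters from the table below:

```yaml
<parent>:
  redis:
    tls:
      enabled: true
      # Pre-existing Secret that must contain the tls.key and tls.crt items
      certSecretName: my-cluster-certificate
```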

In the above example, Redis will attempt to start a TLS-enabled listener using the certificate present in Secret `my-cluster-certificate`.

| Parameter | Type | Default | Description |
|---|---|---|---|
| `certSecretName` | string | | Name of a Secret object containing a certificate (must contain the `tls.key`, `tls.crt` items) |
| `certManager` | boolean | false | Toggle to have a request created for Certmanager to provision a certificate. By default, for a read-write cluster this will request a Certificate covering the following: `redis-[Name of cluster]-primary`, `redis-[Name of cluster]-primary.[Namespace]`, `redis-[Name of cluster]-primary.[Namespace].svc`, `redis-[Name of cluster]-replica`, `redis-[Name of cluster]-replica.[Namespace]`, `redis-[Name of cluster]-replica.[Namespace].svc`. For a read-only cluster the following is automatically requested: `redis-[Name of cluster]-mirror`, `redis-[Name of cluster]-mirror.[Namespace]`, `redis-[Name of cluster]-mirror.[Namespace].svc`. Additional entries can be configured using `extraDNSNames` |
| `enabled` | boolean | false | Toggle to enable TLS. If set to true, a `certSecretName` must be set or `certManager` must be set to true to ensure a valid certificate is available |
| `extraDNSNames` | List of string | [] | List of additional entries to be added to the Certificate requested from Certmanager |
| `issuerGroup` | string | "cert-manager.io" | Group to which the issuer specified under `issuerKind` belongs. Default value is inherited from the global certManager configuration |
| `issuerKind` | string | "ClusterIssuer" | Type of Certmanager issuer to request a Certificate from. Default value is inherited from the global certManager configuration |
| `issuerName` | string | "" | Name of the issuer from which to request a Certificate. Default value is inherited from the global certManager configuration |
| `certSpecExtra` | CertificateSpec | {} | Extra configuration to be injected into the Certmanager Certificate object's spec field. Disallowed options: "secretName" "commonName" "dnsNames" "issuerRef" (These are configured automatically and/or via other options) |
| `certLabels` | k8s:Labels | {} | Extra labels for the Certmanager Certificate object |
| `certAnnotations` | k8s:Annotations | {} | Extra annotations for the Certmanager Certificate object |
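When the certificate is provisioned through Certmanager rather than supplied in a Secret, a configuration could look like the following sketch. The issuer name `internal-ca` and the extra DNS name `redis.example.internal` are hypothetical. If the issuer settings are left out, they are inherited from the global certManager configuration, as described in the table.

```yaml
<parent>:
  redis:
    tls:
      enabled: true
      certManager: true
      issuerKind: ClusterIssuer
      # Hypothetical issuer name
      issuerName: internal-ca
      # Hypothetical extra name, added on top of the default Service names
      extraDNSNames:
        - redis.example.internal
```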